This blog post offers some reflections on predictive modeling and human interaction.

The prediction…

The nice thing about predictive modeling is that it gives you possible answers, which you can use to guide your own or your customers’ actions. You can classify things or try to predict numbers, such as your sales. Another nice thing is that you can retrain your models over time to get better results (hopefully, though that is not guaranteed).

But. This logic might not always hold. Let’s reflect on that.

Let’s start with a simple classification task. For example, you want to classify your customers into normal and high-potential customers. It would be great to detect which customers could be flagged as high-potential, so you could spend more time with them. Your assumption would be that those flagged customers will turn out to be high-potential customers. The more you learn about your customers, the better you might be able to make this classification. So far, so good.

…and the interaction

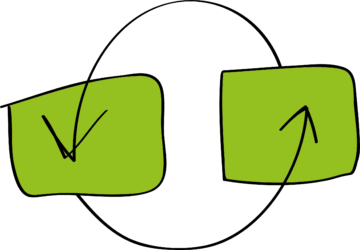

But what if you used motion sensors in an office building to predict whether it will be crowded on a specific day, so that people know whether they can work in peace? The thing is that when people start acting on your predictions, your model might eventually fail. When you predict that it will be quiet, people might come to the office, so it won’t be quiet anymore. And the other way around: when you predict that it will be crowded, people might work from other locations, so it won’t be crowded after all.
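To make this feedback loop concrete, here is a minimal, deliberately extreme sketch in Python. Everything in it is an assumption made for illustration: the headcounts, the threshold for "crowded", the naive "same as yesterday" forecast, and the idea that every flexible worker follows the published forecast. It is not a model of any real office.

```python
def simulate(days, publish_forecast, fixed=30, flexible=60, habitual=10, threshold=50):
    """Return the share of days on which a 'same as yesterday' forecast was right.

    All numbers are hypothetical: `fixed` people always come in, `flexible`
    people can choose, and `habitual` of the flexible people come in when
    nobody tells them what to expect.
    """
    previous = fixed + habitual  # attendance on the day before the simulation starts
    correct = 0
    for _ in range(days):
        # Naive forecast: today will look like yesterday.
        predicted_quiet = previous < threshold
        if publish_forecast:
            # Flexible workers act on the forecast: they all come in when it
            # says "quiet" and all stay away when it says "crowded".
            attendance = fixed + (flexible if predicted_quiet else 0)
        else:
            # Nobody sees the forecast, so behaviour does not change.
            attendance = fixed + habitual
        actually_quiet = attendance < threshold
        correct += predicted_quiet == actually_quiet
        previous = attendance
    return correct / days


print(simulate(10, publish_forecast=False))  # 1.0
print(simulate(10, publish_forecast=True))   # 0.0
```

In this toy setup the forecast is always right as long as it is kept secret, and always wrong once people can see it. Real behaviour would be far less uniform, but the direction of the effect is the same as described above.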

Or take predictive maintenance: you build a model to predict the possible failure of a machine. As soon as a machine is flagged as likely to fail, you fix it, so it doesn’t fail. And there you go: the machine that was flagged to fail didn’t fail, because you fixed it. Judged by the outcome alone, the prediction looks wrong.
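The same effect shows up when you try to score such a model afterwards. The sketch below uses a made-up list of machines with a hidden `would_fail` field (what would have happened without any repair) and assumes that every flagged machine is repaired in time. Both the data and the field names are hypothetical.

```python
# Hypothetical machines: `flagged` is the model's prediction, `would_fail`
# is the hidden truth about what happens if nobody intervenes.
machines = [
    {"id": "A", "flagged": True, "would_fail": True},
    {"id": "B", "flagged": True, "would_fail": True},
    {"id": "C", "flagged": True, "would_fail": True},
    {"id": "D", "flagged": True, "would_fail": False},
    {"id": "E", "flagged": False, "would_fail": False},
    {"id": "F", "flagged": False, "would_fail": False},
]


def observed_failure(machine):
    """What you actually see: flagged machines get repaired and never fail."""
    repaired = machine["flagged"]
    return machine["would_fail"] and not repaired


def precision(machines, outcome):
    """Share of flagged machines for which `outcome` reports a failure."""
    flagged = [m for m in machines if m["flagged"]]
    return sum(outcome(m) for m in flagged) / len(flagged)


true_precision = precision(machines, lambda m: m["would_fail"])
observed_precision = precision(machines, observed_failure)

print(true_precision)      # 0.75
print(observed_precision)  # 0.0
```

The model flags the right machines three times out of four, yet the observed data suggests it is never right, simply because acting on the prediction removed the outcome it predicted.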

Our resolution for 2017 is to find a better solution to these kinds of predictive modeling questions.

What about you? How would you tackle this?